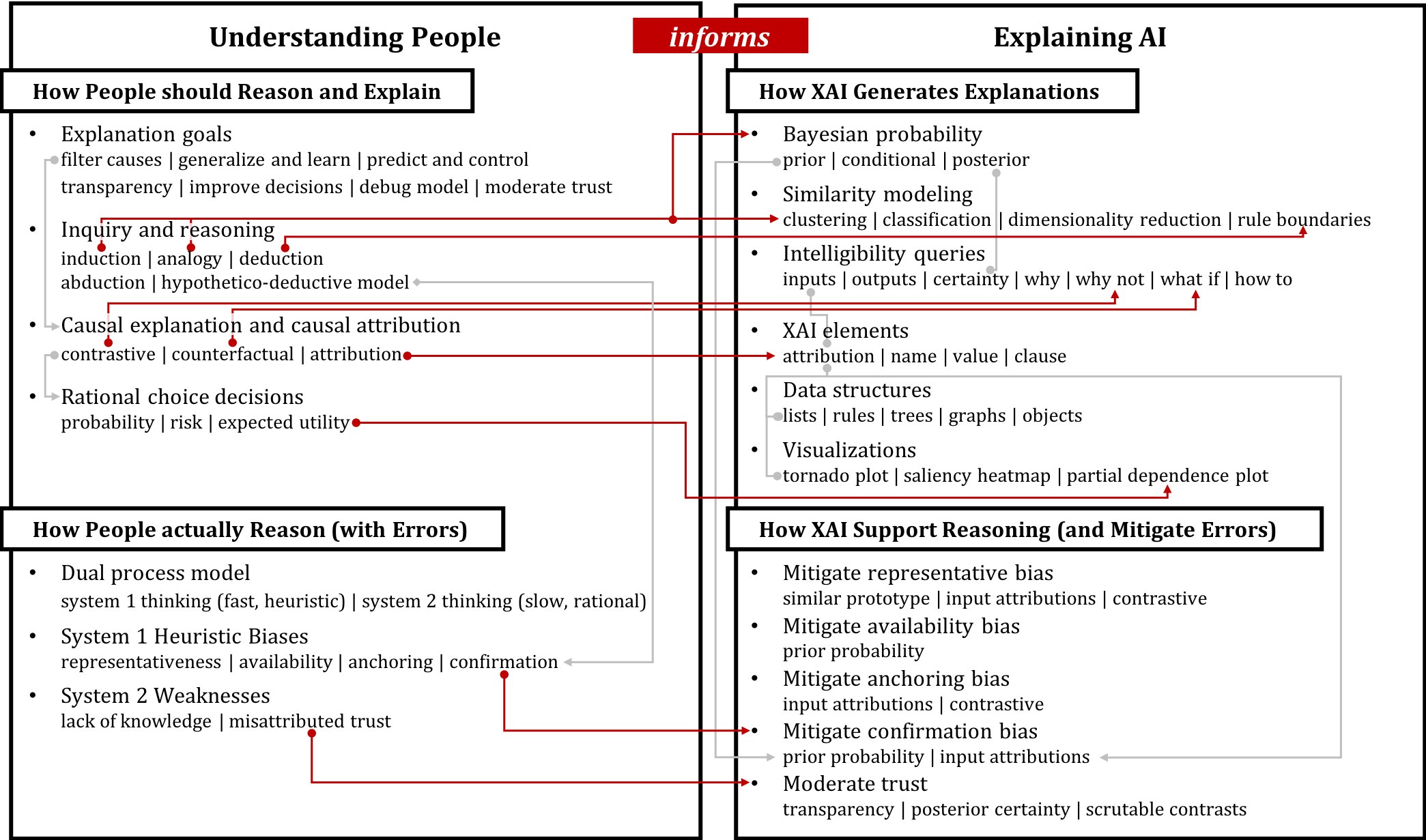

Which explanations should AI provide? We identified pathways to tailor explanation techniques based on theories of human reasoning processes.

From healthcare to criminal justice, artificial intelligence (AI) is increasingly supporting high-consequence human decisions. This has spurred the field of explainable AI (XAI). This paper seeks to strengthen empirical, application-specific investigations of XAI by exploring the theoretical underpinnings of human decision making, drawing from the fields of philosophy and psychology. We propose a conceptual framework for building human-centered, decision-theory-driven XAI based on an extensive review across these fields. Drawing on this framework, we identify pathways along which human cognitive patterns drive the need for XAI and along which XAI can mitigate common cognitive biases. We then put this framework into practice by designing and implementing an explainable clinical diagnostic tool for intensive care phenotyping and conducting a co-design exercise with clinicians. From this, we drew initial insights into how the framework bridges algorithm-generated explanations and human decision-making theories. Finally, we discuss its implications for future generalizable XAI design and development.

Paper:

- Wang, D., Yang, Q., Abdul, A., and Lim, B. Y. 2019. Designing Theory-Driven User-Centric Explainable AI. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems (CHI ’19).