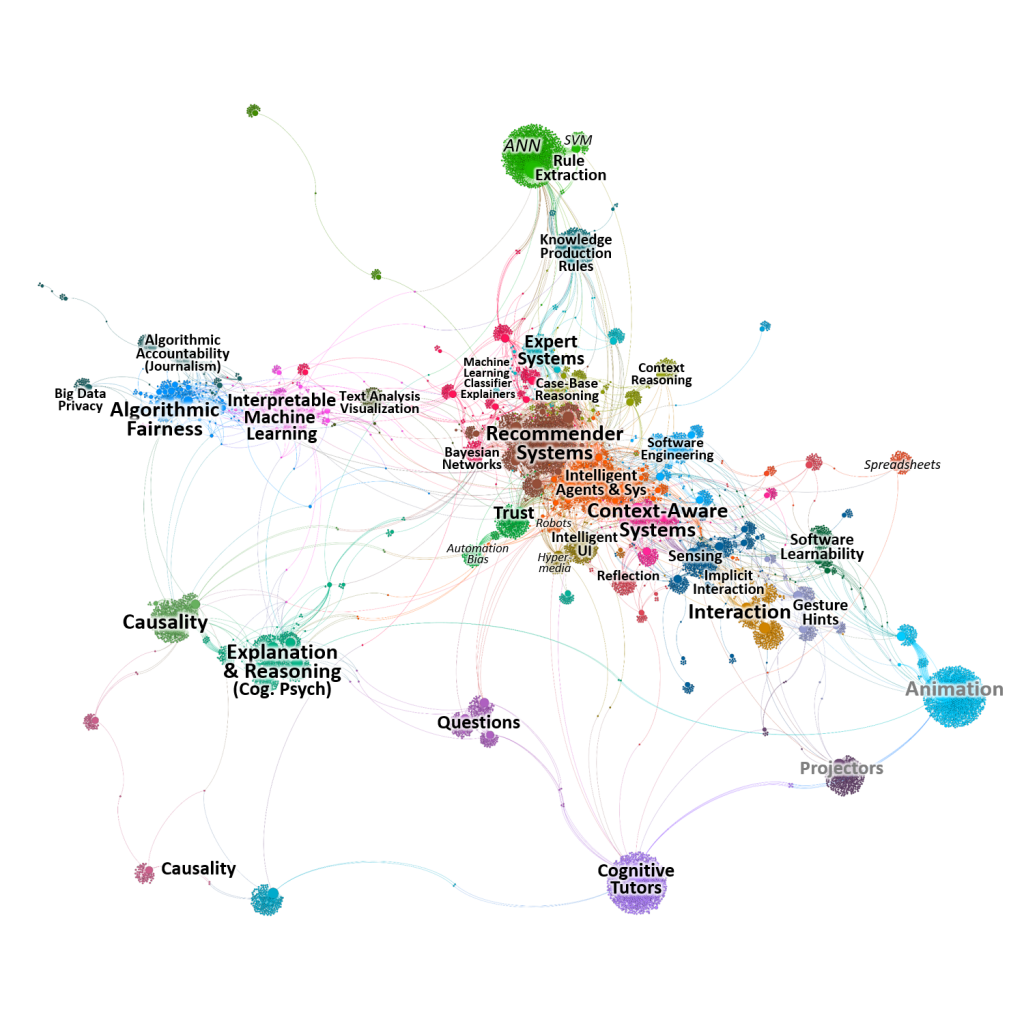

The widespread use of artificial intelligence has spurred much interest in ensuring that we can understand and trust these intelligent systems. Explainable AI (XAI) is a burgeoning field with a rapid pace of research and development, but research on explaining intelligent systems has been progressing for decades across many domains, including computer science, cognitive psychology, and human-computer interaction.

We analyzed over 12,000 papers to identify research trends and trajectories. As AI becomes more commonplace, the need for human interpretability grows, and we argue that closer collaboration between AI and HCI is needed.

Abdul, A., Vermeulen, J., Wang, D., Lim, B. Y. (corresponding author), and Kankanhalli, M. 2018. Trends and Trajectories for Explainable, Accountable and Intelligible Systems: An HCI Research Agenda. In Proceedings of the International Conference on Human Factors in Computing Systems. CHI ’18.
